Measuring the business impact of learning in 2022

Here is an extract from Watershed and LEO Learning’s sixth annual report, which investigates how organizations regard, apply and innovate upon the measurement of their learning and development work.

Key lessons from the measurement of the ‘haves’ and ‘have-nots’ of learning

Although virtually everyone wants to prove that their L&D efforts have a meaningful impact, only a quarter say they work for organizations that allocate budget towards finding that proof. Well-budgeted teams are twice as likely to feel pressure from the executive level, and evidence points to them being more agile in our ongoing age of disruption.

This year’s expanded survey tells the story of the haves and have-nots of learning measurement. 64% of organizations with budget set aside for measurement emphatically believe that it’s possible to prove learning’s impact, and 42% of them doubled down on measurement and strategy in the last year. These organizations lead the way in measuring to improve rather than simply to prove effectiveness, and their journeys provide plenty of inspiration on how to get started — and how to continue to scale and sustain.

6 years of desire to measure, but is there enough action?

For several years now, the survey has given an impression of a significant gap between the widespread desire to measure and a lack of plans and actions made towards measurement. The extra lines of questioning added in this year’s survey have brought much-needed context, making it easier to identify the major barriers that measurement teams are challenged by — and make recommendations for overcoming them.

In the last five surveys, at least 90% of respondents have stated that they strongly agree or agree with the statement ‘I want to measure the business impact of learning programs’. This year’s survey is no exception, with 94% expressing some level of agreement. 88% of respondents similarly agree with the statement ‘I believe it’s possible to demonstrate learning’s impact’.

Despite these positive statements, key measures of demand and action on measurement fell this year. Particularly, executive pressure to measure—which we once hailed as ‘almost inescapable’—is now felt by only 50% of respondents, at its lowest point for five years (and representing the largest single year-on-year percentage fall for any response in the survey).

Business impact vs learning satisfaction

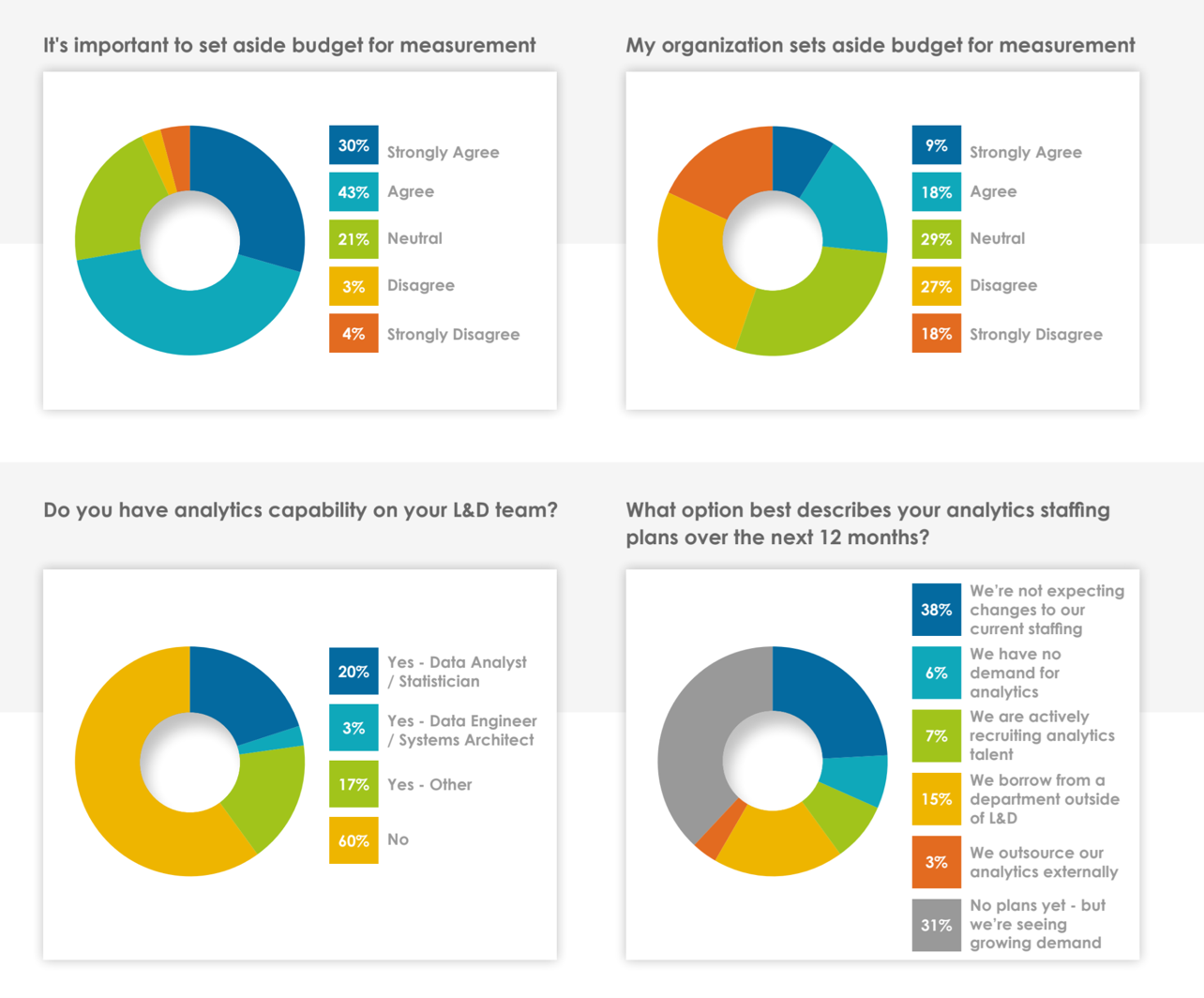

Likewise, our new questioning found that 60% of respondents do not currently have analytics capability on their L&D team and, of these, 47.6% have no plan to make analytics-related staffing changes over the next 12 months.

Other observations, such as the way that relatively basic measures based on learner satisfaction remain the main way that departmental success is evaluated (32% of total), paint a less than dynamic picture. Our preferred measures would be based on organizational impact and improvements in job performance – learner satisfaction is only really valuable if properly contextualized alongside data points such as retention rates and/or development-related sentiment in pulse surveys.

Our new data around budget helps to better contextualize this apparent gap in intent and action. 73% of respondents agree with the principle that it’s important to set aside budget for measurement. However, only 27% agree that their organization actually does so. As we may reasonably expect (and as we go on to demonstrate below), the 27% in these well-budgeted organizations are far more likely to be well-resourced for learning measurement and to state that they’re confident they’re seeing results.

The biggest barrier to measurement

It would, however, be a mistake to characterize lack of investment as the sole barrier to measurement. ‘Competing priorities’ once again tops the list of biggest challenges selected by respondents, up to 41% this year from 33% in 2021. Though a smaller 15% selected ‘don’t know how to start’, there is undeniably a sense that both the market and methods of measurement can appear intimidating at first glance — a clear challenge for learning professionals to overcome.

This narrative ties in with the collective view across LEO Learning, Watershed, and the wider Learning Technologies Group, formed from working with thousands of leaders and organizations in the learning space. The segmentation the expanded survey allows has confirmed to us that while some organizations have yet to make a start and are at risk of being left behind, plenty of others are committed to the measurement journey, are seeing results, and have proven more agile in a crisis.

The Haves and the Have-nots

This year’s survey saw the addition of four new questions regarding:

• Whether it’s seen as important to set aside budget for measurement

• Whether organizations are setting aside that budget

• The measurement capability inside the respondent’s organization

• The organization’s plans for measurement-related staffing in the near future

On their own, these questions offered us a flavor of both organizational budget and capability. However, their greater value has arguably come from the segmentation they allow across all other questions in the survey. In particular, whether organizations actually have budget available for measurement correlates strongly with certain viewpoints, and with overall progress on the measurement journey.

As the analysis took shape, we started to see an emerging story of the haves and the have-nots. While 73% of respondents believe organizations should set aside budget for measurement, just 26.6% are in organizations that currently do this. Of this well-budgeted 26.6%, 58% enjoy access to analytics expertise on their L&D team (compared with 28.3% on teams without a budget). These figures suggest the greater level of ready access to data and staffing that well-budgeted teams enjoy.

Our ‘haves’ don’t just benefit from data and staffing — they also enjoy a far greater level of confidence that the work is possible. 93.8% of organizations with budget set aside for measurement agree or strongly agree with the statement ‘I believe it is possible to prove learning’s impact.’ It’s an emphatic statement of confidence — strong agreement specifically is at 64% (compared with just 42.2% in unbudgeted teams).

By contrast, we may characterize our ‘have-nots’ as being in a rather tough spot. They’re left without any investment, without any staff, hoping for a silver bullet solution. In addition to being faced with the same competing priorities as everyone else, 17% of them don’t actually know where to start. That situation is understandable, given the complexity of the work.

However, the progress and apparent confidence of well-budgeted teams that can and do measure leaves little room for hesitation. In our own experience, these teams are quickly finding this kind of data and analytics work indispensable: it becomes critical to the way they make future decisions.

Or as Bonnie Beresford, Senior Director, Performance and Learning Analytics at GP Strategies, puts it: “At their level, they’re less concerned about simply proving learning effectiveness. They’re able to instead focus on improving. It’s all about making data-driven decisions to allocate (or re-allocate) your L&D budget to where it will have the greatest strategic impact.”

Thankfully, the journeys of the ‘haves’ provide plenty of inspiration on how to get started and continue to grow.

David Ells

CEO, Watershed

Piers Lea

Chief Strategy Officer, Learning Technologies Group

Download a free copy of the report: Measuring the Business Impact of Learning in 2022
